Why you should consider moving from a sequential to an iterative approach for data science projects.

During finals week of my freshman year of college, my peers and I were frantically working on final papers and projects. One of my computer science professors came over to us and said, “Remember, students, premature optimization is the root of all evil.” At the time, I was shocked by what I considered an extreme point of view and a rather presumptuous statement. After a few years of experience, however, I have come to better understand what my professor was trying to communicate, and I now appreciate the benefits of an iterative approach.

What is Premature Optimization?

Popularized by Donald Knuth in his 1974 paper “Structured Programming with go to Statements,” the term premature optimization refers to fine-tuning and perfecting something before you know it is actually needed. Avoiding it is also key to building successful data science projects: start simple, then iterate, repeating the work with small improvements each time, and optimize only the parts that prove to matter.

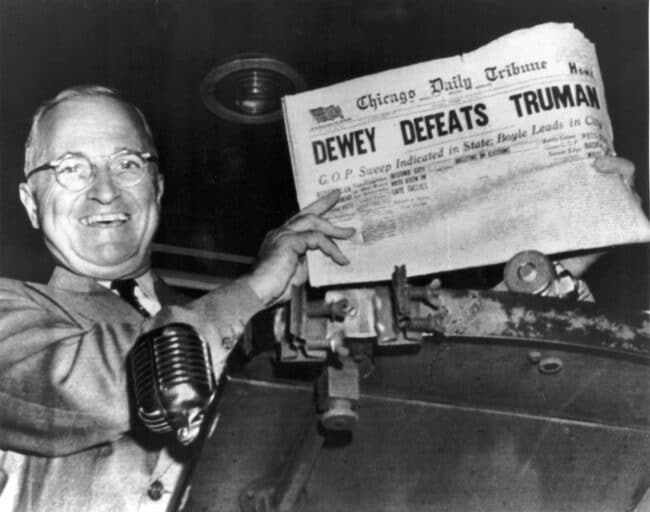

Some people believe that data science projects should start complex and that each step in the process must be fully designed and optimized before moving on to the next. This approach can sound extremely appealing, and its focus on optimization seems to favor long-term success. However, in one of his Stanford lectures on applying machine learning, Andrew Ng argues that this sequential approach (which he refers to as the careful design approach) increases the risk of premature optimization. As a result, it often takes more time, uses more resources, and produces a product that does not meet the business needs.

What is Iterative Development?

An iterative process is one in which an initial version of a product or project is delivered quickly and tested to identify where improvements are needed; changes are then made, and the cycle repeats in small increments. This iterative, start-small approach, which Andrew Ng calls the build-and-fix approach, focuses on quick adaptation and encourages modifications to meet the business needs. For instance, a data science pipeline or machine learning model would be built quickly at first and then improved incrementally over time.
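To make the build-and-fix loop concrete, here is a minimal sketch in plain Python. It is only an illustration, not a method taken from Ng's lecture: the toy data, the two candidate models, the `good_enough` threshold, and every function name are assumptions made up for this example. The idea is to start with the simplest possible model, measure it on held-out data, and move to the next candidate only while the result is not yet good enough.

```python
from statistics import mean

def mae(y_true, y_pred):
    """Mean absolute error between two equal-length sequences."""
    return mean(abs(t - p) for t, p in zip(y_true, y_pred))

def fit_mean_baseline(xs, ys):
    """Iteration 0: ignore the feature and always predict the average."""
    avg = mean(ys)
    return lambda x: avg

def fit_line(xs, ys):
    """Iteration 1: ordinary least-squares line through the training data."""
    x_bar, y_bar = mean(xs), mean(ys)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    sxx = sum((x - x_bar) ** 2 for x in xs)
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    return lambda x: intercept + slope * x

def iterate(candidates, train, valid, good_enough):
    """Try candidates from simplest to most complex; stop once one is good enough."""
    train_x, train_y = train
    valid_x, valid_y = valid
    best_name, best_err = None, float("inf")
    history = []  # (name, validation error) for every candidate actually tried
    for name, fit in candidates:
        model = fit(train_x, train_y)
        err = mae(valid_y, [model(x) for x in valid_x])
        history.append((name, err))
        if err < best_err:
            best_name, best_err = name, err
        if best_err <= good_enough:
            break  # meets the (hypothetical) business threshold; stop optimizing
    return best_name, best_err, history

# Toy data, invented for illustration only.
train = ([1, 2, 3, 4, 5, 6], [2.1, 3.9, 6.2, 8.1, 9.8, 12.1])
valid = ([7, 8], [14.0, 16.1])

candidates = [("mean baseline", fit_mean_baseline), ("line", fit_line)]
best_name, best_err, history = iterate(candidates, train, valid, good_enough=0.5)

for name, err in history:
    print(f"{name}: validation MAE = {err:.3f}")
print("selected:", best_name)
```

The baseline is deliberately crude. Its validation error gives you a number to beat, and the loop stops as soon as a candidate clears the threshold, so no effort goes into a more elaborate model that the project may not need.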

Why choose an iterative approach for data science projects?

To save time and money and avoid unnecessary work

Instead of making sure each step is perfect before moving on to the next, a better approach is to create a minimum viable product that gets the job done. It is often unclear up front which parts of a system will be easy or difficult to build, or where you should focus your time. The iterative approach helps by revealing the weak links and the parts that break early on. It is better to find out early what works and what does not than to find out close to the project deadline. With this approach, you are more likely to stay on time and on budget without sacrificing quality.

To experience greater success and collaboration

In Effective Data Storytelling: How to Drive Change with Data, Narrative and Visuals, Brent Dykes explains the importance of this iterative approach when writing about post-war Japanese manufacturers that encouraged employees to introduce small improvements to their factories over time. Dykes writes, “Eventually, the culmination of these small process refinements over the years helped Japanese firms such as Toyota and Sony gain a major competitive advantage in terms of product quality and manufacturing efficiency.” These small, incremental improvements with an iterative approach make all the difference.

If you spend too much time working on tiny efficiencies here and there in a sequential approach, you run the risk of missing the big picture. You may also spend too much time working on something that will be outdated by the time it is released, since business needs shift frequently. With an iterative approach, you can respond quickly to change because you are constantly testing, evaluating, and gathering feedback. This feedback may come from clients, domain experts, and others on your team, which makes for a highly communicative and collaborative project. As a result, the finished product will be better aligned with the goals and needs of the business.

Example of an Iterative Process

In the end, my professor was right about premature optimization. When writing my final papers, I didn’t start by writing and perfecting the introduction. Instead, I created a rough draft with bullet points for each paragraph or section. Then I went back in and started turning those bullet points into complete thoughts. Once I had lots of text, I started adding in references and more detail. At this point, I would get feedback from professors and peers. Slowly, I turned my bare-bones draft into a final paper that was well written and on time.

For your next data science project, starting simple and iterating toward a well-optimized solution could bring you more wins. You’ll save time and money, collaborate better, and see greater success.
