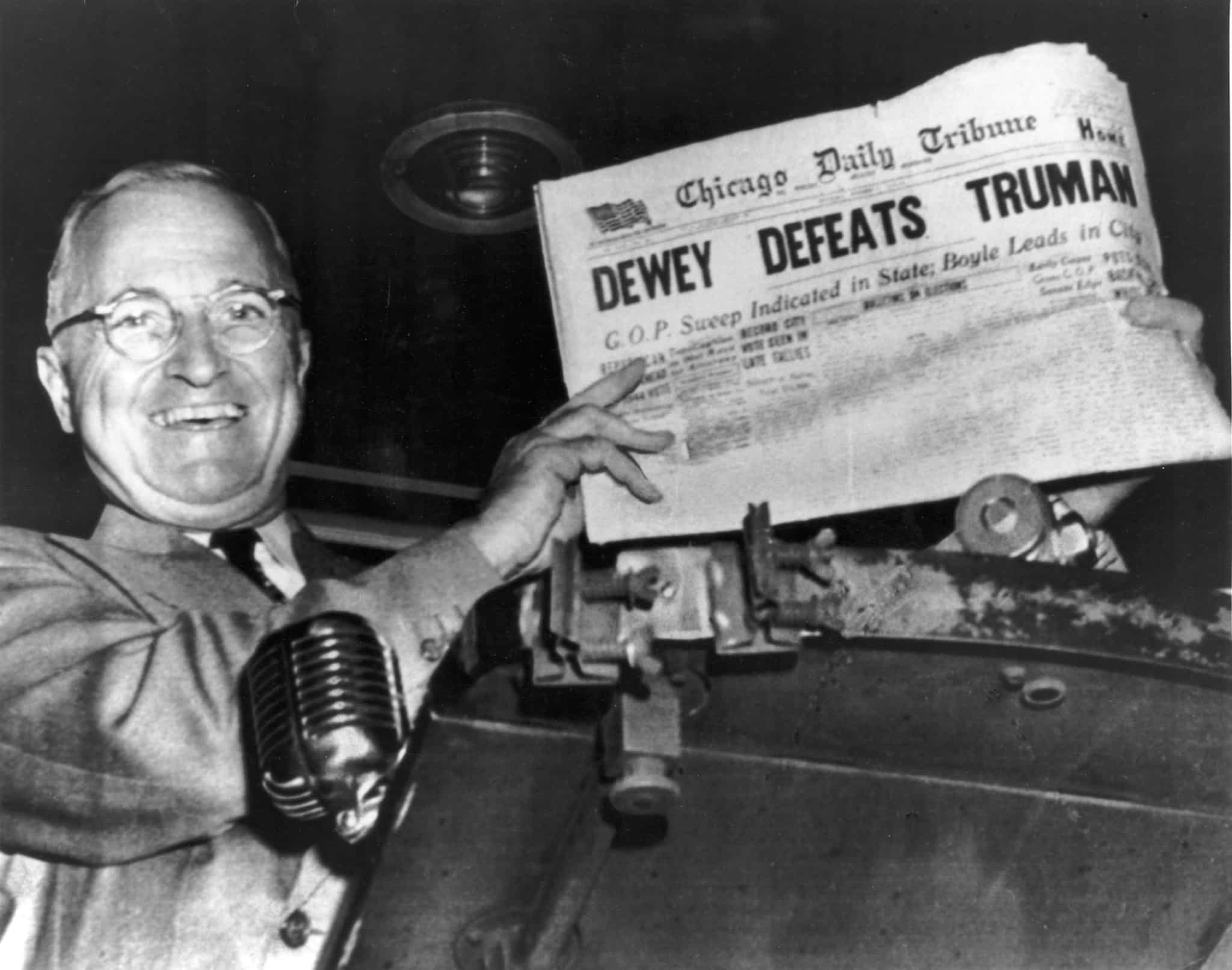

In the annals of American history, few blunders are as iconic as the data validation failure of the 1948 U.S. presidential election. It is a cautionary tale from the early days of data analytics, immortalized on the front page of the Chicago Daily Tribune with the infamous headline, “DEWEY DEFEATS TRUMAN.”

The 1948 U.S. Election: A Cautionary Tale of Confirmation Bias in Data Analysis

Following the death of President Franklin D. Roosevelt in 1945, then Vice President Harry S. Truman faced the daunting task of leading the country through the end of the Second World War. Though he was ultimately successful, victory in Japan soon gave way to economic and cultural whiplash as the United States transitioned to peacetime.

Party infighting, rising inflation, and labor unrest doomed Truman’s Democratic Party in the 1946 midterm elections, where it surrendered control of both chambers of Congress.1 Truman, unlike his historically popular predecessor2, was unpopular even among the southerners in his own party, leading nearly every political pundit to agree that he would lose the 1948 election.

However, on election night 1948, the votes were counted and a different story emerged. Against all expectations, Truman secured a decisive upset victory over his Republican opponent, Governor Thomas Dewey. Truman won the Electoral College 303 to 189!

This extraordinary miscalculation shook the emerging polling industry to the core, causing the public to question whether polling organizations like Gallup, Roper, and Crossley were needed at all.

What Went Wrong?

First of all, public polling concluded just two weeks before the election and undecided voters “broke heavily for Truman.”3 As George Gallup himself stated, “We did not get a cross section of the population. We did not allow for the fact that people change their minds.”

The way samples were drawn compounded the problem. Polls of the era did not use true random sampling, and any sample tied to telephone ownership was skewed from the start: unlike today, owning a telephone in the 1940s was a luxury. As a result, the samples overrepresented wealthier, urban households at the expense of lower-income, rural households.

Though the polling firms sought to address this bias by setting quotas for different groups based on gender, age, and geography, the Social Science Research Council determined that these quotas were skewed toward more educated people, introducing even more bias.3
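To make the sampling problem concrete, here is a minimal Python sketch of how an unrepresentative sample can distort an estimate, and how reweighting each group to its population share (often called post-stratification) can partially correct it. Every figure below is invented for illustration and is not 1948 polling data; the group names and the `raw_support` and `weighted_support` helpers are hypothetical.

```python
# Illustrative only: every number here is made up, not historical data.

# Assumed share of each group in the full population.
population_share = {"urban": 0.40, "rural": 0.60}

# Hypothetical survey: respondents per group and how many back candidate A.
sample = {
    "urban": {"respondents": 700, "support_a": 385},  # 55% support
    "rural": {"respondents": 300, "support_a": 120},  # 40% support
}


def raw_support(sample):
    """Unweighted share of all respondents supporting candidate A."""
    total = sum(g["respondents"] for g in sample.values())
    supporters = sum(g["support_a"] for g in sample.values())
    return supporters / total


def weighted_support(sample, population_share):
    """Weight each group's support rate by that group's population share."""
    estimate = 0.0
    for group, counts in sample.items():
        group_rate = counts["support_a"] / counts["respondents"]
        estimate += population_share[group] * group_rate
    return estimate


raw = raw_support(sample)  # pulled toward the overrepresented urban group
adjusted = weighted_support(sample, population_share)
print(f"raw: {raw:.3f}, weighted: {adjusted:.3f}")
```

In this made-up case the raw sample suggests candidate A is ahead, while the reweighted estimate suggests the opposite. Reweighting only helps when the grouping variables capture the real source of skew, so it should be treated as one check among several rather than a complete fix.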

How to Prevent Confirmation Bias

The polling firms of 1948 learned a difficult lesson that far too many companies are still unaware of today: numbers may be neutral, but their interpretations are not.

When analyzing data, we must check our assumptions both rigorously and frequently to avoid confirmation bias. This is especially important in industries where a widely shared viewpoint can go unchallenged and quietly shape how results are read.

To ensure reliable outcomes, it’s important that data scientists validate assumptions through best practices like:

- Periodic review – Consider incorporating quarterly reviews of your data pipelines. Routinely identifying inconsistencies helps keep your methodologies valid, and promoting a culture of accuracy eases the burden on your quality assurance team as well.

- Iterative testing – Keeping your data models aligned with market factors is far easier when your changes are iterative. Therefore, it’s imperative that your testing is routine and cyclical, so that feedback can further refine your predictions. Testing your models “as needed” may appear to save money in the short term, but it risks making your models short-sighted as well.

- Qualitative assessments – Given the rise of natural language processing methodologies in the data industry, consider incorporating non-numerical data into your model, such as interviews, focus groups, and observational studies. These resources can further underscore trends and prevailing patterns, and they add nuance to multi-faceted issues where your model might lack predictive power.
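One way to make periodic review and iterative testing routine is to score each cycle's predictions against what actually happened and flag the cycles where the error exceeds a tolerance. The sketch below assumes predictions and outcomes are available as paired numbers per period; the `review_periods` function, the period labels, the values, and the 0.05 tolerance are all hypothetical.

```python
from statistics import mean


def review_periods(history, tolerance):
    """Return (period, mean_abs_error) for periods whose error exceeds tolerance.

    history maps a period label to a list of (predicted, actual) pairs.
    """
    flagged = []
    for period, pairs in history.items():
        error = mean(abs(predicted - actual) for predicted, actual in pairs)
        if error > tolerance:
            flagged.append((period, round(error, 4)))
    return flagged


# Made-up example values for three review cycles.
history = {
    "Q1": [(0.50, 0.52), (0.48, 0.47)],
    "Q2": [(0.51, 0.50), (0.49, 0.50)],
    "Q3": [(0.55, 0.45), (0.52, 0.44)],
}

flagged = review_periods(history, tolerance=0.05)
print(flagged)
```

With these example values only the third cycle is flagged. Running a check like this every cycle, rather than only when something looks wrong, is what keeps the review from depending on whether the results happen to match expectations.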

Furthermore, there are several best practices to minimize the risks of sampling bias:

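- Increasing sample sizes – Larger samples reduce error margins and improve the reliability of your testing, provided the sample is drawn representatively. A large sample collected with a skewed method is still skewed, so size should complement sound sampling rather than replace it.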

- Standardizing data collection pipelines – While not explicitly mentioned in the 1948 election story, creating and enforcing standards for data collection is an emerging issue for modern-day companies. Even with large sample sizes, differences in procedures at the local level can produce wild results in the aggregate and are often far harder to track retroactively in analyses. Standardization ensures consistency in this regard, which not only makes your findings more reliable, but also makes it easier to validate and compare your results over time.

- Collaborating with field experts – Even with best practices in place, it helps to consult professionals whose expertise is deep enough to recognize and correct flawed methodologies and interpretations at every stage. When engaged, they can provide the insights that turn your data’s potential into real value.
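The sample-size point can be quantified. For a simple random sample, a standard textbook approximation of the 95% margin of error for a proportion is 1.96 × sqrt(p(1 − p) / n), so the margin shrinks with the square root of the sample size. The Python sketch below applies that formula and then shows why size alone does not remove a systematic skew. The sample sizes and the 5-point selection bias are assumed values chosen for illustration, and `margin_of_error` is a hypothetical helper name.

```python
import math


def margin_of_error(p, n, z=1.96):
    """Approximate 95% margin of error for a proportion from a simple random sample."""
    return z * math.sqrt(p * (1 - p) / n)


# Random sampling error shrinks as n grows...
for n in (100, 1_000, 10_000):
    print(n, round(margin_of_error(0.5, n), 4))

# ...but a systematic bias does not. Hypothetical: the sampling method
# overstates support by 5 points no matter how many people are surveyed.
true_support = 0.50
selection_bias = 0.05  # assumed for illustration
expected_estimate = true_support + selection_bias
moe_large = margin_of_error(expected_estimate, 10_000)

# True when even the low end of the interval sits above the true value.
interval_misses_truth = expected_estimate - moe_large > true_support
print(interval_misses_truth)
```

Under these assumptions, the large sample produces a narrow interval that still excludes the true value, which is why representative sampling and standardized collection matter alongside sample size.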

Invest in Data Validation to Overcome Confirmation Bias

In a business era where data is as abundant as time is scarce, shortcuts are tempting. However, as the 1948 election illustrates, the cost of making business decisions based on faulty analytics can far exceed the resources spent on thorough validation. Seen in this light, investing in data validation is not just a best practice; it’s an insurance policy that should not be overlooked. Reach out to InfoWorks’ data science experts to get started.

1. “‘Dewey Defeats Truman’: The Election Upset Behind the Photo”. https://www.history.com/news/dewey-defeats-truman-election-headline-gaffe

2. “Ranked: Presidential Approval Ratings from Worst to First”. https://www.benzinga.com/general/politics/14/11/5030580/presidential-approval-ratings-ranked-from-first-to-worst

3. “A HISTORY OF POLLING – 1948 – DEWEY DEFEATS TRUMAN”. https://www.publiusthegeek.com/2018/07/a-history-of-polling-1948-dewey-defeats.html